One of the coolest features in the RC2 release for RavenDB is the automatic setup, and in particular how we managed to get a completely automated secured setup with a minimal amount of fuss on the user’s end.

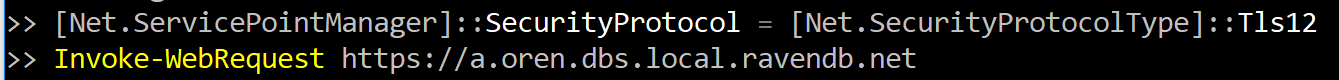

You can watch the whole thing from start to finish. It takes about 3 minutes to go through the process (if you aren’t also explaining what you are doing), and at the end you have a fully secured cluster whose nodes talk to each other over secured TLS 1.2 channels. This was made harder because we are actually running with trusted certificates. This was a hard requirement, because we use the RavenDB Studio to manage the server, and that is a web application hosted on RavenDB itself. As such, it is subject to all the usual rules of browser-based applications, including scary warnings and an inability to act if the certificate isn’t valid and trusted.

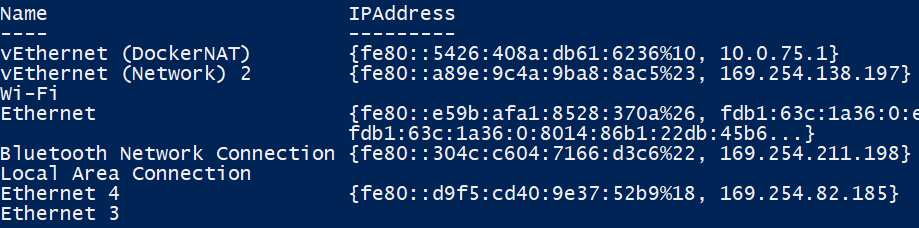

In many cases, this leads people to choose HTTP, because at least with that model you don’t have to deal with all the hassle. Consider the problem. Unlike a website, which has (at least conceptually) a single deployment, RavenDB is actually deployed on customer sites and runs on anything from local developer machines to cloud servers. In many cases, it is hidden behind multiple layers of firewalls, routers and internal networks. Users may choose to run it in any number of strange and wonderful configurations, and it is our job to support all of them.

In such a situation, defaulting to HTTP only makes things easy, mostly because things just work. Using HTTPS requires that we use a certificate. We can obviously use a self-signed certificate, and have the browser show its untrusted certificate warning to the user on the first access to the website.

As you can imagine, this is not going to inspire confidence in users. In fact, I can think of few other ways to ensure the shortest “download to recycle bin” path. Now, we could ask the administrator to generate a certificate and ensure that this certificate is trusted. And that would work, if we could assume that there is an administrator. I think that asking a developer who isn’t well versed in security practices to do that is likely to result in an even shorter “this is a waste of my time” reaction than the unsecured warning option.

We considered the option of installing a (locally generated) root certificate and generating a certificate from that. This would work, but only on the local machine, and RavenDB is, by nature, a distributed database. So that would make for a great demo, but it would cause a great deal of hardships down the line. Exactly the kind of feature and behavior that we don’t want. And even if we generate the root certificate locally and throw it away immediately afterward, the idea still bothered me greatly, so that was something that we considered only in times of great depression.

So, to sum it all up, we need a way to generate a valid certificate for a random server, likely running in a protected network, inaccessible from the outside (as in, pretty much all corporate / home networks these days). We need to do so without requiring the user to do things like set up dynamic DNS, configure port forwarding in the router or generate their own certificates. We also need it to be fast enough that we can do that as part of the setup process. Anything that would require a few hours / days is out of the question.

We looked into what it would take to generate our own trusted SSL certificates. This is actually easily possible, but the cost is prohibitive, given that we wanted to allow this for free users as well, and all the options we got had a cost per generated certificate associated with them.

Let’s Encrypt is the answer for HTTPS certificate generation on the public web, but the vast majority of our deployments are likely to be inside the firewall, so we can’t verify a certificate using Let’s Encrypt. Furthermore, doing so would require users to define and manage DNS settings as part of the deployment of RavenDB. That is something that we wanted to avoid.

This might require some explanation. The setup process that I’m talking about is not just meant to set up a production instance. We consider any installation of RavenDB to be worth a production-grade setup. This is a lesson from the database ransomware tales. I see no reason why we should learn this lesson again on the backs of our users, so a high priority was given to making sure that the default install mode is also the secure and proper one.

All the options that are ruled out in this post (provide your own certificate, set up DNS, etc.) are entirely possible (and quite easy) with RavenDB, if an admin so chooses, and we expect that many will want to set up RavenDB in a manner that fits their organization’s policies. But here we are talking about the base line (yes, dear) install and we want to make it as simple and straightforward as we possibly can.

There is another problem with Let’s Encrypt in our situation: we need to generate a lot of certificates, significantly more than the default rate limit that Let’s Encrypt provides. Luckily, they provide a way to request an extension to this rate limit, which is exactly what we did. Once this was granted, we were almost there.

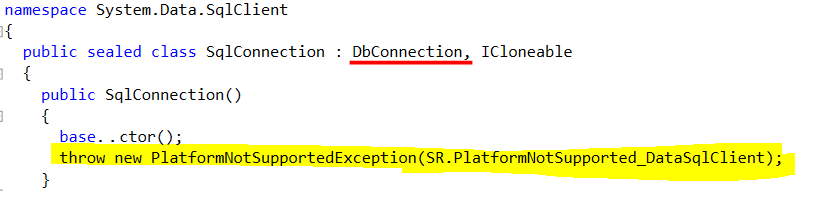

The way RavenDB generates certificates as part of the setup process is a bit involved. We can’t just generate any old hostname; we need to provide proof to Let’s Encrypt that we own the hostname in question. For that matter, who is the “we” in question? I don’t want to be exposed to all the certificates that are generated for the RavenDB instances out there. That is not a good way to handle security.

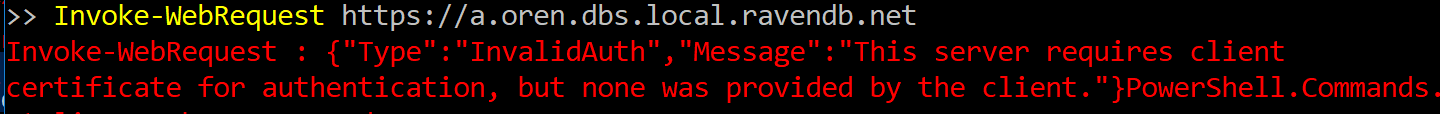

The key for the whole operation is the following domain name: dbs.local.ravendb.net

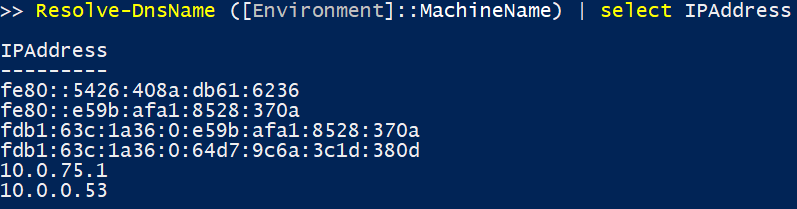

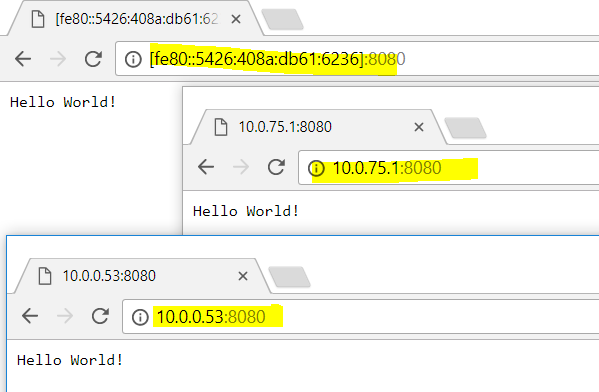

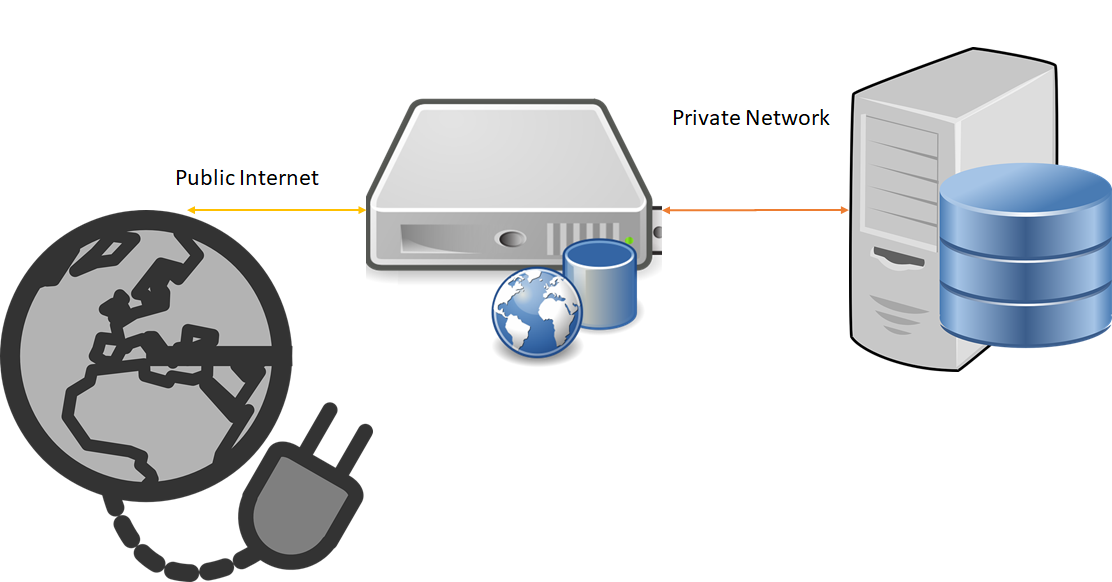

During setup, the user will register a subdomain under that, such as arava.dbs.local.ravendb.net. We ensure that only a single user can claim each domain. Once they have done that, they let RavenDB know what IP address they want to run on. This can be a public IP, exposed on the internet, a private one (such as 192.168.0.28) or even a loopback device (127.0.0.1).
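To make the three kinds of address concrete, here is a minimal Python sketch using the standard `ipaddress` module. The `setup_hostname` and `classify_address` helpers are hypothetical names made up for illustration; they are not part of RavenDB, and the real setup code may well look different.

```python
import ipaddress

BASE_DOMAIN = "dbs.local.ravendb.net"  # the domain named in this post


def setup_hostname(subdomain: str) -> str:
    """Build the full hostname a user claims during setup (hypothetical helper)."""
    return f"{subdomain}.{BASE_DOMAIN}"


def classify_address(ip: str) -> str:
    """Label an address as loopback, private, or public (simplified)."""
    addr = ipaddress.ip_address(ip)
    # Check loopback first: the ipaddress module also reports 127.0.0.0/8 as private.
    if addr.is_loopback:
        return "loopback"
    if addr.is_private:
        return "private"
    # Everything else is treated as public in this simplified sketch.
    return "public"


print(setup_hostname("arava"))
for ip in ("127.0.0.1", "192.168.0.28", "8.8.8.8"):
    print(ip, classify_address(ip))
```

All three kinds of address are acceptable targets for the DNS record, which is what lets the same hostname scheme work on a developer laptop and on a cloud server.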

The local server, running on the user’s machine, then initiates a challenge with Let’s Encrypt for the hostname in question. With the answer to the challenge, the local server then calls api.ravendb.net. This is our own service, running in the cloud. The purpose of this service is to validate that the user “owns” the domain in question and to update the DNS records to match the Let’s Encrypt challenge.
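For readers who haven’t seen a DNS challenge before, here is a rough sketch of what the “answer” looks like under the standard ACME DNS-01 rules (RFC 8555). This is not RavenDB’s actual code. The function name, the token and the account thumbprint below are placeholder assumptions; in a real run, the token comes from Let’s Encrypt and the thumbprint is derived from the ACME account key.

```python
import base64
import hashlib
from typing import Tuple


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, as ACME requires."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def dns01_txt_record(domain: str, token: str, account_thumbprint: str) -> Tuple[str, str]:
    """Return the (record name, TXT value) pair for an ACME DNS-01 challenge."""
    # Key authorization: the challenge token joined to the account key thumbprint.
    key_authorization = f"{token}.{account_thumbprint}"
    digest = hashlib.sha256(key_authorization.encode("ascii")).digest()
    return f"_acme-challenge.{domain}", b64url(digest)


# Placeholder values, for illustration only.
name, value = dns01_txt_record(
    "arava.dbs.local.ravendb.net", "example-token", "example-thumbprint"
)
print(name)
print(value)
```

The value is only a hash, so a service that publishes the TXT record on the user’s behalf never needs to see the private key that the certificate will be issued for.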

The local server can then go to Let’s Encrypt and ask them to complete the process and generate the certificate for the server. At no point do we need to have the certificate go through our own servers; it is all handled on the client machine. There is another thing that is happening here. Alongside the DNS challenge, we also update the domain the user chose to point to the IP they are going to be hosted at. This means that the global DNS network will point to your database. This is important, because we need the hostname that you’ll use to talk to RavenDB to match the hostname on the certificate.
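If you want to convince yourself that the hostname really points where you expect, a small check along these lines would do. It is a sketch using Python’s standard `socket` module, and `resolve_ipv4` and `points_at` are hypothetical helper names, not RavenDB functions. The resolver is passed in as a parameter so the check can be tried without touching the network.

```python
import socket
from typing import Callable, Set


def resolve_ipv4(hostname: str) -> Set[str]:
    """Return the IPv4 addresses the system resolver reports for hostname."""
    infos = socket.getaddrinfo(hostname, None, family=socket.AF_INET)
    # Each entry is (family, type, proto, canonname, sockaddr); sockaddr is (ip, port).
    return {info[4][0] for info in infos}


def points_at(hostname: str, expected_ip: str,
              resolver: Callable[[str], Set[str]] = resolve_ipv4) -> bool:
    """True if hostname resolves to expected_ip; False on mismatch or lookup failure."""
    try:
        return expected_ip in resolver(hostname)
    except socket.gaierror:
        return False


def fake_resolver(hostname: str) -> Set[str]:
    """Stand-in resolver so the example runs offline."""
    return {"192.168.0.28"}


print(points_at("arava.dbs.local.ravendb.net", "192.168.0.28", resolver=fake_resolver))
```

Leaving out the `resolver` argument makes the same call go through the machine’s real DNS configuration.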

Obviously, RavenDB will also make sure to refresh the Let’s Encrypt certificate on a timely basis.
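As an illustration of what “timely” could mean, here is a minimal sketch of a renewal check. Let’s Encrypt has traditionally issued certificates that are valid for 90 days; the 30 day renewal window and the `needs_renewal` name are my assumptions for the example, not RavenDB’s documented policy.

```python
from datetime import datetime, timedelta, timezone

# Assumed threshold for this sketch, not RavenDB's documented behavior.
RENEW_BEFORE = timedelta(days=30)


def needs_renewal(not_after: datetime, now: datetime,
                  renew_before: timedelta = RENEW_BEFORE) -> bool:
    """True once we are inside the renewal window before the certificate expires."""
    return now >= not_after - renew_before


issued = datetime(2018, 1, 1, tzinfo=timezone.utc)
not_after = issued + timedelta(days=90)  # typical Let's Encrypt lifetime

print(needs_renewal(not_after, issued + timedelta(days=10)))
print(needs_renewal(not_after, issued + timedelta(days=70)))
```

Renewing well before expiry leaves room to retry if the first attempt fails, for example because of a temporary DNS problem.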

The entire process is seamless and quite amazing when you see it. Especially because even developers might not realize just how much goes on under the cover and how much pain was taken away from them.

We ran into a few issues along the way, and Let’s Encrypt support has been quite wonderful in this regard, including deploying a code fix that allowed us to make it in time for RC2 with the full feature in place.

There are still issues if you are running on a completely isolated network, and some DNS configurations can cause problems, but we typically detect them and give a good warning (allowing you to switch your DNS server to 8.8.8.8 as a good workaround in most such cases). The important thing is that we achieved the main goal: a seamless and easy setup with the highest level of security.